The US government and Anthropic, which holds a lucrative defense contract, are locked in a bitter dispute over how the military should be able to use the company’s AI models. Dario Amodei, Anthropic’s CEO, has insisted that the company’s models not be used for autonomous weapons or mass surveillance of Americans—uses that the Pentagon says are not contemplated or would be illegal. Negotiations over how to salvage the $200 million contract for classified work grew heated this week, with Amodei saying “we cannot in good conscience” accept the Pentagon’s assurances.

When the Friday afternoon deadline for reaching an agreement came and went, the standoff escalated. President Trump ordered all federal agencies to stop using Anthropic’s artificial intelligence products within six months, calling it “a radical left AI company run by people who have no idea what the real world is all about.” Secretary of War Pete Hegseth went further, designating Anthropic a supply chain risk to national security, which means no other company that does business with the US military could use its products.

Many employees of Anthropic and its competitors across Silicon Valley have cheered the company’s stand as a victory for AI ethics.

AI ethics are important. Guardrails are, too. But AI ethics aren’t the only vital interests at stake here. The ethics surrounding national defense, democratic accountability, and the power of unelected corporate leaders are, too.

There is a serious ethical question about whether one company, elected by nobody, with its own normative agenda as well as substantial global investors and customers, should be dictating the conditions of the most essential government role: protecting the lives of Americans. Indeed, one could argue that Anthropic could pose a long-term risk to the United States because there is no assurance that the company would put American lives ahead of other lives or considerations.

American voters have no say in how the company makes its decisions, including its ethical guidelines. Anthropic’s shareholders, on the other hand, do have a say—and their original funders are the leaders of the not-so-ethical and oh-so-nutty billionaire-backed Effective Altruism movement.

By contrast, government oversight regimes in all three branches provide accountability and recourse for government actions, including military actions, that voters find troubling or unlawful. The system often works slowly and poorly—but that’s democracy in action. Indeed, in the actual case of NSA warrantless surveillance after 9/11, the public reacted and Congress changed the rules substantially. That’s public accountability. And that’s how we as a nation adapt to changing threats and responses.

Anthropic provides none of that kind of democratic accountability. Instead, it lets one billionaire have an extraordinary say in our nation’s defense that should give anyone concerned about democratic accountability pause.

Safeguards exist

Anthropic’s public positioning makes for good public relations and appeals to a left-leaning constituency, but it grossly misstates the facts.

The specifics matter. The Pentagon has never said or indicated it intends to violate existing laws or policies about “mass surveillance” of Americans. In the real world, the Pentagon does not run general domestic policing of our fellow citizens. Its “surveillance” capabilities are directed abroad, at terrorists and foreign adversaries that threaten our lives and interests. The Pentagon’s most controversial intelligence organization is the National Security Agency, which is responsible for collecting foreign signals intelligence (including e-mail and telephone calls) as well as making and breaking codes for things like nuclear weapons. Note the word “foreign.”

NSA collects intelligence about Americans in rare circumstances, under constrained statutory authorities, using extensive procedures to protect American civil liberties, and with oversight by all three branches of the federal government. When controversies and concerns erupt, NSA activities and authorities are debated in Congress and revised if necessary.

It’s Congress’s job to set the boundaries. The job of intelligence agencies is to work right up to those boundaries to protect the United States—getting “chalk dust on our cleats” as former CIA and NSA director Mike Hayden liked to say.

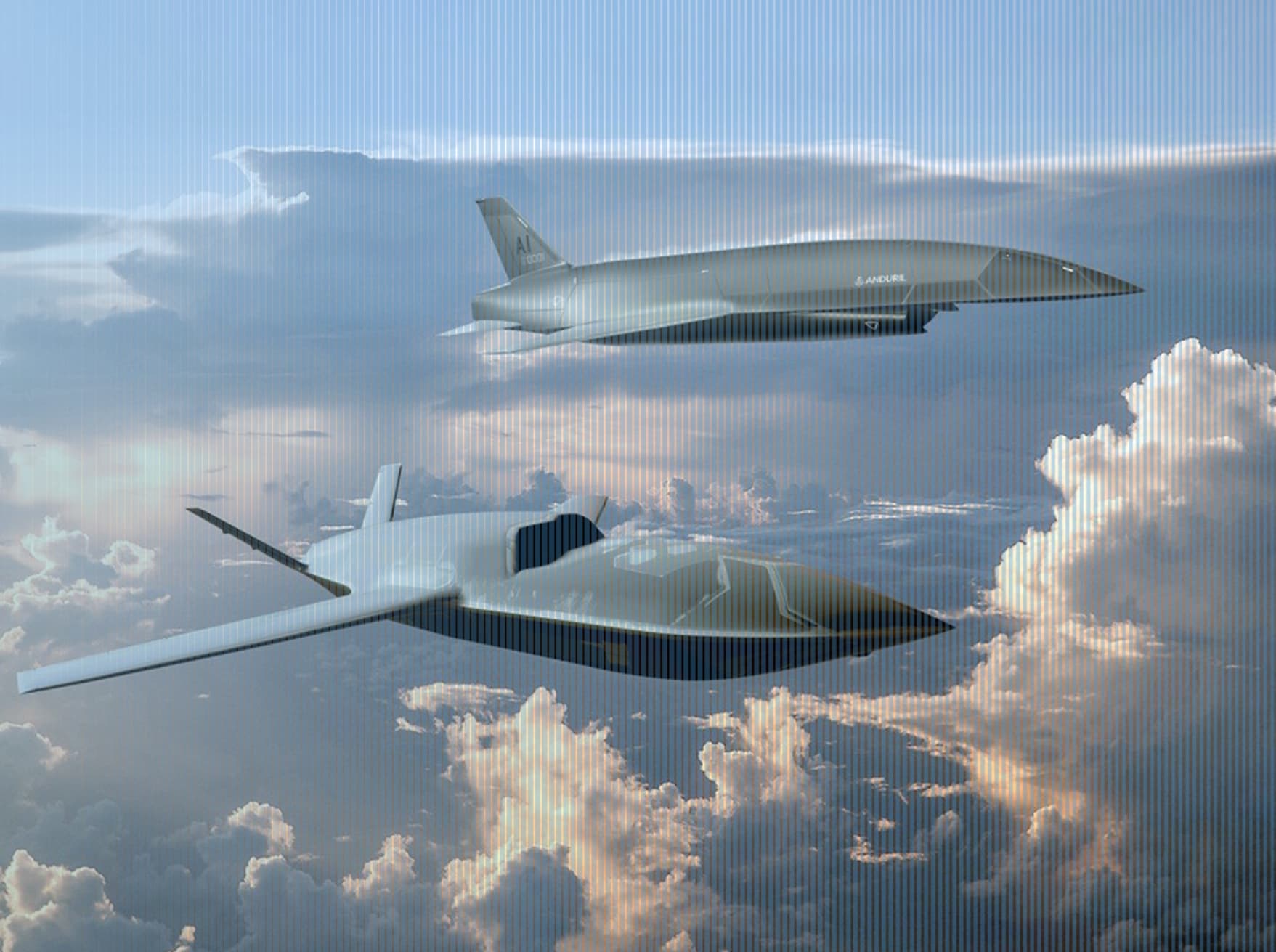

Reasonable people disagree about how US intelligence agencies should operate. Debates about the actions of secret agencies in a democratic society are essential. We must have them. But these debates should be adjudicated through democratic channels, not software license negotiations.

It’s also important to take seriously what autonomy means, with its risks and rewards, in a military context. For example: dogfights between AI-piloted F-16s and human-piloted F-16s show the AI fighter plane wins. This should not be a surprise. We know machines react faster than humans in all sorts of scenarios.

Insisting on a “human in the loop” in all circumstances for all time sounds reassuring, but it shouldn’t be. It means the United States potentially increases the chance of losing in future combat against AI-enabled adversaries, even for purely defensive actions like intercepting an incoming nuclear missile autonomously.

Anthropic is certain of its moral high ground—but the future of military conflict doesn’t work that way. Nor is there any guarantee that Anthropic won’t change its mind one way or the other on what is considered “safe” use of AI in warfare. In fact, Anthropic scaled back its support of AI safety this past week in the face of increasing economic competition, after years of preaching about AI safety to burden competitors with AI safety requirements.

I also note that the Pentagon has had relevant ethical guidelines for more than a decade (the Department of War takes this very seriously); that the deal notes Anthropic AI is to be used “for all lawful purposes”—it’s not carte blanche; and that the United States has used weapons that go boom without a human in the loop for a long time. They’re called mines.

A supplier’s veto

Then there’s the potential precedent of giving the leader of one defense supplier the power to direct how its products could be used (after agreeing to a lucrative contract). This is akin to a company that makes a specialized bolt for the F-35 demanding a veto over how the aircraft is used in war—on the theory that only it understands the dangers of its own handiwork.

A Rawlsian veil-of-ignorance thought experiment would ask: Would you want that power for suppliers of other products that can be used in ways we might not foresee? What if a company’s “ethical guidelines” do not accord with national values? In all cases, do you think giving this kind of power to a company makes the nation safer or less safe?

The United States is entering an era in which AI firms are developing capabilities that are central to intelligence, logistics, cyber operations, and weapons systems—all outside the government. That reality brings extraordinary opportunity. It also brings extraordinary concentration of power.

We can debate how autonomous future systems should be. We should. We can debate how surveillance authorities should evolve. We must.

But we should not sleepwalk into a world where ultimate decisions about the use of national defense capabilities migrate from democratic institutions to corporate boardrooms.

That prospect should make all of us—left, right, and center—deeply uneasy.

Amy Zegart is the Morris Arnold and Nona Jean Cox Senior Fellow at the Hoover Institution. The author of five books, she specializes in US intelligence, emerging technologies, and national security. At Hoover, she leads the Technology Policy Accelerator and the Robert and Marion Oster National Security Affairs Fellows Program. She also is an associate director and senior fellow at the Stanford Institute for Human-Centered AI; a senior fellow at the Freeman Spogli Institute; and professor of political science (courtesy) at Stanford University. Her research includes the leading academic study of intelligence failures before 9/11: Spying Blind: The CIA, the FBI, and the Origins of 9/11 (Princeton, 2007) and the bestseller Spies, Lies, and Algorithms: The History and Future of American Intelligence (Princeton, 2022), which was nominated by Princeton University Press for the Pulitzer Prize.